AI Voice Cloning: How It Works, Risks & Use Cases

Imagine receiving a phone call from your CEO asking for an urgent bank transfer, or hearing a loved one’s voice asking for help. The voice sounds real, familiar, and emotionally convincing. But what if that voice was generated by AI?

This is no longer science fiction. AI voice cloning has rapidly evolved into one of the most powerful and controversial branches of artificial intelligence. It enables machines to replicate a human voice with astonishing accuracy, sometimes using only a few seconds of audio. While the technology unlocks enormous value for businesses, creators, and accessibility, it also introduces serious ethical, security, and legal risks.

In this in-depth guide, we explore how AI voice cloning works, the technology behind it, its real-world use cases, and the risks you must understand before adopting it. The goal is simple: help you make informed, responsible decisions in an era where voice is becoming a digital asset.

What Is AI Voice Cloning?

AI Voice Cloning Explained Simply

AI voice cloning is a subset of AI voice synthesis that allows a machine learning model to replicate a specific human voice. Unlike traditional text-to-speech systems that generate generic voices, voice cloning focuses on mimicking the unique characteristics of a real speaker.

To clarify the differences:

- Text-to-Speech (TTS): Converts text into spoken audio using pre-built, generic voices.

- Voice Synthesis: The broader field of generating artificial speech. Synthetic voices may sound human without being based on any real person.

- AI Voice Cloning: Recreates the tone, pitch, rhythm, and emotional nuances of a specific individual.

Modern AI voice cloning systems rely on deep learning models trained on real voice data. The result is speech that can be very hard to distinguish from the original speaker, especially for untrained listeners.

Human Voice as Data

From an AI perspective, the human voice is a complex biometric signal. It contains layers of information beyond words:

- Pitch and frequency patterns

- Accent and pronunciation habits

- Speech rhythm and pauses

- Emotional expression and intonation

This is why voices are increasingly treated as biometric identifiers, similar to fingerprints or facial features. According to research published by the World Economic Forum, voice-based biometrics are among the fastest-growing authentication methods, precisely because voices are unique and difficult to consciously alter.

AI voice cloning leverages this uniqueness, turning voice into trainable data that can be stored, replicated, and synthesized on demand.

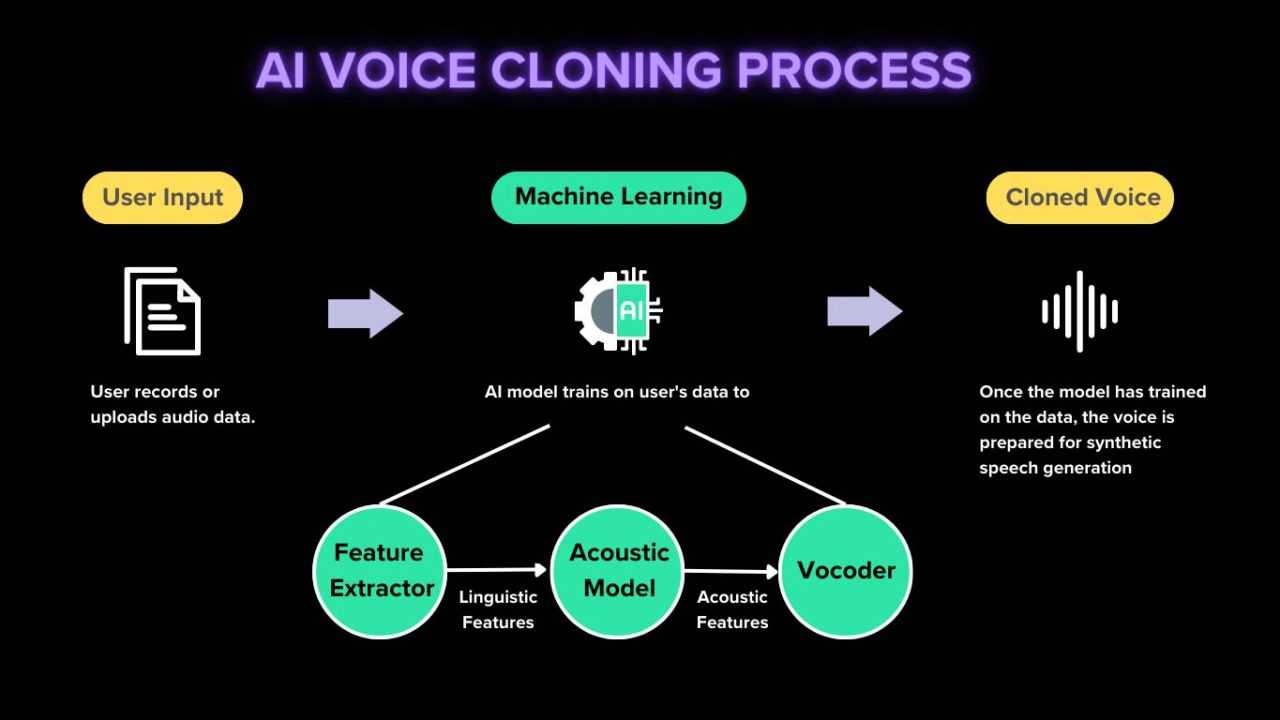

How AI Voice Cloning Works (Step-by-Step)

Although the output feels almost magical, AI voice cloning follows a structured technical process rooted in machine learning and signal processing.

Step 1: Voice Data Collection

The first requirement is voice data. The amount needed varies:

- As little as 10–30 seconds for instant voice cloning

- Several minutes or hours for professional-grade cloning

High-quality datasets usually include:

- Clear recordings with minimal background noise

- Multiple speaking styles and emotional tones

- Different sentence structures and phonetic coverage

From an ethical standpoint, reputable AI providers require explicit consent from the voice owner. This consent is critical, as unauthorized voice data collection is one of the biggest legal risks in AI voice cloning today.
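As a rough illustration of the collection step, the sketch below counts only recordings that have documented consent and then maps the usable duration to a cloning tier. The `Recording` class, the function names, and the exact thresholds are assumptions made for this example; the 10-second floor and the "several minutes" boundary only loosely follow the ranges mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Recording:
    path: str              # hypothetical file name, not read by this sketch
    duration_s: float      # length of the clip in seconds
    consent_on_file: bool  # True only if the speaker gave explicit consent


def usable_seconds(recordings):
    """Sum the duration of recordings that have documented consent."""
    return sum(r.duration_s for r in recordings if r.consent_on_file)


def cloning_tier(total_s):
    """Map usable audio duration to a tier (thresholds are illustrative)."""
    if total_s < 10:
        return "insufficient"
    if total_s < 180:  # under roughly three minutes: instant cloning only
        return "instant"
    return "professional"


recordings = [
    Recording("intro.wav", 12.0, True),
    Recording("interview.wav", 300.0, False),  # excluded: no consent
]
total = usable_seconds(recordings)
print(total, cloning_tier(total))
```

Filtering on consent before measuring duration keeps unauthorized audio out of the pipeline from the first step, rather than relying on a later review.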

Step 2: Feature Extraction

Once the audio is collected, the system does not work with the raw sound alone. Instead, AI models analyze the recordings and extract features such as:

- Phonemes (basic sound units)

- Prosody (rhythm, stress, and intonation)

- Pitch contours and frequency patterns

The speaker-specific characteristics are then encoded as numerical vectors known as speaker embeddings. Think of an embedding as a digital fingerprint of a voice: it captures what makes the voice unique while abstracting away the actual words spoken.
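To make the idea of a voice "fingerprint" more concrete, here is a minimal sketch of how two embeddings can be compared using cosine similarity, a common way to measure how closely two vectors point in the same direction. The three-dimensional vectors are toy values invented for this example; real embeddings have many more dimensions and are produced by a trained model.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("vectors must be non-zero")
    return dot / (norm_a * norm_b)


# Toy embeddings (hypothetical values, for illustration only).
speaker_a_clip1 = [0.9, 0.1, 0.3]
speaker_a_clip2 = [0.8, 0.2, 0.3]
speaker_b_clip1 = [0.1, 0.9, 0.2]

same_speaker = cosine_similarity(speaker_a_clip1, speaker_a_clip2)
different_speaker = cosine_similarity(speaker_a_clip1, speaker_b_clip1)
print(same_speaker > different_speaker)
```

In this toy setup, two clips from the same speaker score higher than clips from different speakers, which is the property that makes embeddings useful for both cloning and speaker verification.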

Key Technologies Involved

Modern voice cloning systems rely on advanced neural architectures, including:

- Deep Neural Networks (DNNs) for pattern recognition

- Transformer models for contextual understanding

- Speech synthesis models such as Tacotron, WaveNet, and VITS

Google DeepMind’s WaveNet, for example, was a major breakthrough that demonstrated how neural networks could generate raw audio waveforms with human-like quality. Many commercial systems today build upon similar foundations.

Step 3: Model Training

During training, the AI model learns to map text inputs to audio outputs that match the target voice. Depending on the system, training can be:

- Supervised: Using labeled voice-text pairs

- Self-supervised: Learning patterns without explicit labels

High-end systems aim for voice generalization, meaning the AI can generate speech the original speaker never recorded while still sounding authentic. This is what allows cloned voices to read entirely new scripts and, in some systems, to speak other languages.

Step 4: Voice Synthesis & Output

Once trained, the model can generate speech from any text input. Advanced platforms allow users to control:

- Speaking speed and emphasis

- Emotional tone (calm, excited, serious)

- Multilingual pronunciation

At this stage, the cloned voice can be deployed across applications such as videos, virtual assistants, call centers, or interactive media, often via APIs or web dashboards.
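The controls listed above usually end up as parameters in a request sent to the provider. The sketch below shows one possible shape for such a request. Every field name, the allowed emotion values, and the speed range are assumptions for illustration and do not describe any specific vendor's API.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical set of supported tones, taken from the examples in the text.
ALLOWED_EMOTIONS = {"calm", "excited", "serious"}


@dataclass
class SynthesisRequest:
    text: str
    voice_id: str          # hypothetical identifier of a consented voice model
    speed: float = 1.0     # 1.0 means normal speaking rate
    emotion: str = "calm"
    language: str = "en"

    def validate(self):
        """Reject requests with empty text or out-of-range settings."""
        if not self.text.strip():
            raise ValueError("text must not be empty")
        if not 0.5 <= self.speed <= 2.0:
            raise ValueError("speed must be between 0.5 and 2.0")
        if self.emotion not in ALLOWED_EMOTIONS:
            raise ValueError(f"unsupported emotion: {self.emotion}")

    def to_json(self):
        """Validate, then serialize the request as a JSON payload."""
        self.validate()
        return json.dumps(asdict(self))


request = SynthesisRequest(text="Welcome back!", voice_id="demo-voice",
                           emotion="excited")
print(request.to_json())
```

Validating on the client side before sending keeps obviously malformed requests from reaching the synthesis service.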

Types of AI Voice Cloning

Instant Voice Cloning

Instant voice cloning systems are designed for speed and convenience. They typically require only a short audio sample and can generate a usable voice model within minutes.

Pros:

- Fast setup

- Low technical barrier

- Ideal for demos and experimentation

Cons:

- Lower accuracy

- Limited emotional range

- Higher risk of artifacts

These systems are popular among content creators and early-stage projects but are rarely used for high-stakes enterprise applications.

Professional / High-Fidelity Voice Cloning

Professional voice cloning uses extensive, studio-quality datasets and longer training times. The result is near-human realism, suitable for commercial and enterprise use.

Common applications include branded voice assistants, audiobooks, and customer service automation. Companies investing in this level of cloning often treat voice models as proprietary intellectual property.

Real-Time Voice Conversion

Real-time voice conversion is a specialized form of AI voice cloning that transforms a speaker’s voice instantly during live interactions. Unlike traditional cloning, which generates audio from text, this method converts one voice into another while preserving the original speech content.

This technology is widely used in:

- Online gaming and virtual worlds

- Live streaming and virtual events

- Secure communication and anonymity scenarios

While real-time systems are impressive, they require significant computing power and are more susceptible to latency and audio artifacts. As a result, they are typically deployed by advanced platforms rather than casual users.
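The latency constraint can be illustrated with simple arithmetic. Audio is processed in small frames, and the model has to finish converting each frame before the next one arrives. The sketch below assumes hypothetical timings for inference and input/output overhead; the function names are made up for this example.

```python
def frame_duration_ms(frame_samples, sample_rate_hz):
    """Length of one audio frame in milliseconds."""
    return 1000 * frame_samples / sample_rate_hz


def end_to_end_latency_ms(frame_samples, sample_rate_hz,
                          inference_ms, io_overhead_ms):
    """Buffering delay plus model time plus capture/playback overhead."""
    return (frame_duration_ms(frame_samples, sample_rate_hz)
            + inference_ms + io_overhead_ms)


def keeps_up(frame_samples, sample_rate_hz, inference_ms):
    """True if each frame is processed faster than it takes to record."""
    return inference_ms < frame_duration_ms(frame_samples, sample_rate_hz)


# Example: 480-sample frames at 48 kHz are 10 ms long.
print(frame_duration_ms(480, 48000))
print(end_to_end_latency_ms(480, 48000, inference_ms=6.0, io_overhead_ms=20.0))
print(keeps_up(480, 48000, inference_ms=12.0))
```

If inference takes longer than the frame itself, as in the last call, unprocessed audio piles up and the delay grows over time, which is why real-time conversion needs fast hardware.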

Real-World Use Cases of AI Voice Cloning

Marketing & Advertising

In marketing, AI voice cloning allows brands to maintain a consistent “voice identity” across multiple campaigns and languages. Instead of repeatedly hiring voice actors, companies can reuse a licensed brand voice at scale.

Examples include:

- Personalized audio ads based on user behavior

- Localized campaigns without re-recording

- Dynamic voiceovers for social media videos

According to a 2024 report by Deloitte, personalized audio content can increase engagement rates by up to 35%, making voice cloning an attractive tool for performance-driven marketers.

Content Creation & Media

Content creators are among the fastest adopters of AI voice cloning. Podcasters, YouTubers, and audiobook publishers use it to:

- Scale content production

- Maintain consistent narration styles

- Update content without re-recording

A growing trend is “voice continuity,” where creators preserve their voice even when unavailable or after long breaks. This has raised both excitement and ethical debate within the creator economy.

Business & Enterprise Applications

For businesses, voice cloning is reshaping customer communication. AI-powered voice agents can now sound natural, empathetic, and brand-aligned.

Common enterprise use cases include:

- AI customer support representatives

- Interactive voice response (IVR) systems

- Sales and onboarding simulations

Enterprises adopting AI voice cloning often report reduced operational costs and improved customer satisfaction, especially when paired with conversational AI platforms.

Education & E-Learning

In education, voice cloning enhances personalization and accessibility. Instructors can create AI versions of their voices to deliver lessons across multiple formats and languages. Typical applications include:

- Personalized tutoring experiences

- Language pronunciation training

- Support for visually impaired learners

UNESCO has highlighted AI-driven accessibility tools as a key factor in closing global education gaps, particularly in remote and multilingual environments.

Entertainment & Gaming

Game studios and entertainment companies use AI voice cloning to generate dynamic dialogue at scale. Non-player characters (NPCs) can now speak naturally, respond contextually, and evolve over time.

This reduces production costs while opening new creative possibilities, such as:

- Procedurally generated storylines

- Virtual influencers

- Interactive films and experiences

Personal & Accessibility Use Cases

Beyond business, AI voice cloning offers profound personal value. Individuals with degenerative speech conditions can preserve their voice for future communication.

For accessibility advocates, this is one of the most meaningful applications of the technology, turning AI into a tool for dignity and inclusion rather than convenience alone.

Risks & Ethical Concerns of AI Voice Cloning

Voice Deepfakes & Fraud

The most publicized risk of AI voice cloning is fraud. Criminals have used cloned voices to impersonate executives, family members, and public figures.

Notable risks include:

- CEO fraud and financial scams

- Social engineering attacks

- Manipulated audio evidence

In 2023, Europol warned that AI-generated voice deepfakes could become a dominant tool in cybercrime, urging organizations to strengthen voice verification processes.

Privacy & Consent Issues

Voice data is deeply personal. Using someone’s voice without permission raises serious privacy concerns. Ethical AI providers now emphasize:

- Explicit user consent

- Clear data ownership terms

- Secure storage and deletion policies

From a trust perspective, transparency is non-negotiable. Users must know when they are interacting with a synthetic voice.

Legal & Regulatory Challenges

Globally, regulation has struggled to keep pace with AI voice cloning. Some jurisdictions treat voice as biometric data, while others lack clear definitions.

In Vietnam and many emerging markets, regulatory frameworks are still evolving. Businesses operating internationally must navigate a patchwork of laws related to data protection, intellectual property, and consumer rights.

Ethical AI Standards

Responsible platforms adopt safeguards such as:

- Audio watermarking

- Usage logging and monitoring

- Clear disclosure of AI-generated content
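Of these safeguards, usage logging is the simplest to sketch. The example below builds one log record per synthesis request, storing a hash of the text rather than the text itself. The field names and the `make_usage_record` function are assumptions for illustration, not a standard schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_usage_record(user_id, voice_id, text, disclosed_as_ai):
    """Build an audit record for one synthesis request."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "voice_id": voice_id,
        # Store a fingerprint of the script instead of the script itself.
        "text_sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "characters": len(text),
        "disclosed_as_ai": disclosed_as_ai,
    }


record = make_usage_record("user-42", "demo-voice",
                           "Your order has shipped.", disclosed_as_ai=True)
print(json.dumps(record))
```

Hashing the script lets auditors confirm later that a given text was synthesized without keeping potentially sensitive content in the log.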

How to Use AI Voice Cloning Safely

Best Practices for Businesses

Organizations should treat voice cloning as a strategic asset, not a novelty. Recommended best practices include:

- Implementing consent-first policies

- Restricting access to voice models

- Training staff on AI-related risks

Security teams should also combine voice verification with secondary authentication methods to prevent fraud.
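A minimal sketch of that policy: a voice match alone never approves a sensitive request, no matter how high the score. The function name, the score scale, and the 0.8 threshold are assumptions chosen for this example.

```python
def approve_request(voice_score, second_factor_ok, threshold=0.8):
    """Approve only when the voice matches AND a second factor succeeded.

    voice_score: similarity between 0 and 1 from a voice verification system.
    second_factor_ok: result of an independent check, such as a one-time code
    or a call back to a known number.
    """
    return bool(voice_score >= threshold and second_factor_ok)


print(approve_request(0.99, second_factor_ok=False))
print(approve_request(0.99, second_factor_ok=True))
```

Because a good clone can score very high on voice similarity, the second factor is what actually stops an impersonation attempt in the first call above.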

How to Detect AI-Generated Voices

While detection is challenging, warning signs include unnatural pacing, inconsistent emotion, or lack of background noise. Specialized AI detection tools are emerging, but human awareness remains critical.

Future of AI Voice Cloning

AI and Emotional Intelligence

The next frontier is emotion-aware voice synthesis. Future systems will adapt tone, empathy, and context in real time, blurring the line between human and machine communication.

Regulation & Trust Layers

Expect stronger regulatory oversight, standardized disclosure requirements, and embedded trust layers such as cryptographic audio signatures.
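As a simplified illustration of an audio signature, the sketch below uses an HMAC from Python's standard library to bind a signature to the exact bytes of an audio file. This is a symmetric stand-in chosen for brevity; real provenance schemes would more likely use public-key signatures so that anyone can verify without holding the secret. The key and audio bytes are placeholders.

```python
import hashlib
import hmac


def sign_audio(audio_bytes, key):
    """Return a hex HMAC-SHA256 signature over the audio bytes."""
    return hmac.new(key, audio_bytes, hashlib.sha256).hexdigest()


def verify_audio(audio_bytes, key, signature):
    """Check the signature using a constant-time comparison."""
    expected = sign_audio(audio_bytes, key)
    return hmac.compare_digest(expected, signature)


key = b"demo-secret-key"                  # placeholder, never hard-code keys
audio = b"\x00\x01\x02\x03 fake audio"    # placeholder for real audio bytes
signature = sign_audio(audio, key)

print(verify_audio(audio, key, signature))
print(verify_audio(audio + b"tampered", key, signature))
```

Any change to the audio, even a single byte, produces a different signature, so a valid signature shows the file is exactly what the signer released.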

Market Outlook

Analysts predict the global AI voice market will exceed USD 20 billion by 2030, driven by enterprise adoption and multimodal AI systems.

Conclusion: Should You Use AI Voice Cloning?

AI voice cloning is neither inherently good nor bad. It is a powerful tool whose impact depends on how responsibly it is used. For businesses and individuals alike, the key is understanding both its capabilities and its risks.

When deployed ethically, voice cloning can enhance productivity, accessibility, and creativity. When misused, it can erode trust and security.

The smartest approach is informed adoption, guided by transparency, consent, and robust safeguards.

Frequently Asked Questions (FAQ)

Is AI voice cloning legal?

Legality depends on jurisdiction and consent. Using a voice without permission is increasingly restricted under data protection and biometric laws.

How much data is needed to clone a voice?

It ranges from seconds for basic cloning to hours for professional-quality results.

Can AI voice cloning be detected?

Detection is improving, but high-quality clones remain difficult to identify without specialized tools.

Explore Trusted AI Voice Solutions

If you are evaluating AI voice cloning tools for business or personal use, explore in-depth reviews, feature comparisons, and transparent pricing on ai.duythin.digital.

Our platform is built by Vietnam’s leading AI community to help you save time, reduce risk, and make confident AI decisions.