AI Voice Changer API: Integrate Real-Time Voice AI for Scalable Applications

Voice is becoming the new interface of the digital world. From virtual assistants and online games to call centers and content creation, how we sound now matters as much as what we say. However, building realistic, flexible, and real-time voice transformation systems from scratch is complex, expensive, and time-consuming. This is where the AI Voice Changer API emerges as a game-changing solution.

By leveraging advanced deep learning models, an AI Voice Changer API allows developers and businesses to transform voices instantly, clone unique vocal identities, and deploy voice AI at scale without reinventing the wheel. In this in-depth guide, we explore how real-time voice AI works, why it matters, and how organizations can integrate it effectively to gain a competitive edge.

What Is an AI Voice Changer API?

Definition of an AI Voice Changer API

An AI Voice Changer API is a software interface that enables applications to modify, transform, or clone human voices using artificial intelligence. Unlike traditional audio effects, these APIs use neural networks trained on massive speech datasets to analyze vocal characteristics such as pitch, timbre, tone, and speaking style.

Through simple API calls or streaming connections, developers can input live or recorded audio and receive a transformed voice output in real time or near real time. This makes voice transformation accessible not only to AI research labs but also to startups, SaaS platforms, and enterprise systems.

How It Differs from Traditional Voice Changers

Traditional voice changers rely on basic signal processing techniques such as pitch shifting or echo filters. While these effects can alter sound, they often feel artificial and robotic. AI-powered voice changers, by contrast, are trained to understand how real human voices behave.

- Rule-based tools: Modify frequencies without understanding speech context.

- AI voice changers: Reconstruct speech using learned voice patterns.

Research on neural speech synthesis, including work published through IEEE, generally reports higher naturalness and speaker similarity than conventional digital signal processing methods. This is why modern AI voice APIs tend to sound noticeably more human.

How AI Voice Changer APIs Work

Core Technologies Behind Voice AI

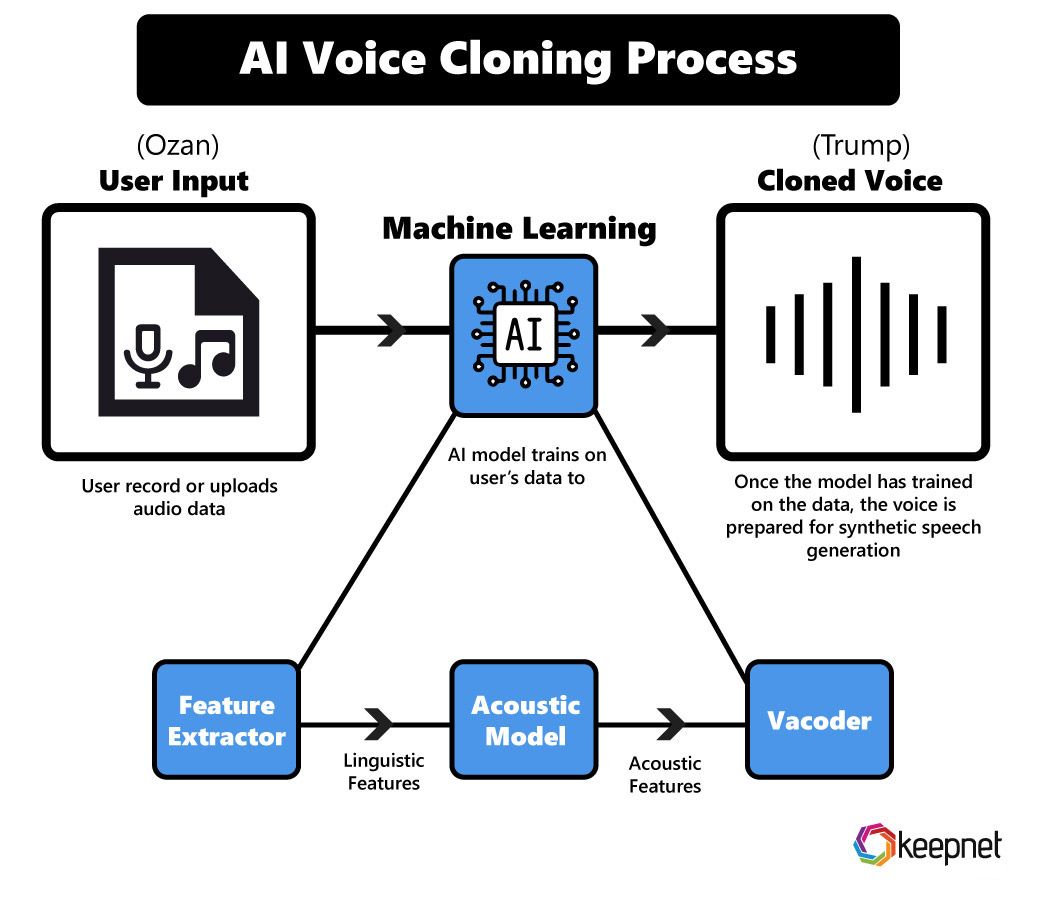

At the heart of any AI Voice Changer API lies a combination of deep learning models, typically based on convolutional neural networks (CNNs), recurrent neural networks (RNNs), or transformers. These models are trained on thousands of hours of speech to learn how voices are structured.

Most modern solutions use a speech-to-speech pipeline rather than converting speech to text and back again. This reduces latency and preserves natural prosody, which is critical for real-time applications.

Audio Input Processing

The process begins with capturing audio input from a microphone or audio stream. This input is segmented into small frames, allowing the AI model to process speech continuously rather than waiting for an entire recording to finish.
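
To make the framing step concrete, here is a minimal sketch in plain Python. The 20 ms frame length and the 16 kHz sample rate are illustrative assumptions, not values from any particular provider, and `split_into_frames` is a hypothetical helper name.

```python
from typing import Iterator, List


def split_into_frames(
    samples: List[float], sample_rate: int, frame_ms: int = 20
) -> Iterator[List[float]]:
    """Yield consecutive fixed-length frames; the final frame is zero-padded."""
    frame_len = sample_rate * frame_ms // 1000
    if frame_len <= 0:
        raise ValueError("frame_ms is too small for this sample rate")
    for start in range(0, len(samples), frame_len):
        frame = samples[start:start + frame_len]
        if len(frame) < frame_len:
            # Pad the tail so every frame handed to the model has the same size.
            frame = frame + [0.0] * (frame_len - len(frame))
        yield frame


# One second of silence at 16 kHz, cut into 20 ms frames.
frames = list(split_into_frames([0.0] * 16000, sample_rate=16000, frame_ms=20))
print(len(frames), len(frames[0]))  # 50 320
```

Shorter frames lower the delay before the first audio reaches the model, at the cost of more messages per second.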

Noise reduction and normalization are often applied at this stage to improve output quality, especially in live environments such as online calls or gaming sessions.
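
As a rough illustration of this stage, the sketch below applies peak normalization and a very crude noise gate to a single frame. Production systems typically rely on spectral or learned noise suppression; the gate here is only a stand-in, and the threshold and target peak are assumed values.

```python
import math
from typing import List


def rms(frame: List[float]) -> float:
    """Root-mean-square level of a frame (0.0 for an empty frame)."""
    if not frame:
        return 0.0
    return math.sqrt(sum(x * x for x in frame) / len(frame))


def peak_normalize(frame: List[float], target_peak: float = 0.9) -> List[float]:
    """Scale the frame so its loudest sample reaches target_peak."""
    peak = max((abs(x) for x in frame), default=0.0)
    if peak == 0.0:
        return list(frame)  # silence: nothing to scale
    gain = target_peak / peak
    return [x * gain for x in frame]


def noise_gate(frame: List[float], threshold: float = 0.01) -> List[float]:
    """Mute frames whose RMS level is below threshold (assumed to be noise)."""
    if rms(frame) < threshold:
        return [0.0] * len(frame)
    return list(frame)


cleaned = peak_normalize(noise_gate([0.1, -0.45, 0.2]))
```

Gating before normalizing avoids amplifying frames that contain only background noise.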

AI Voice Transformation Layer

Once audio is preprocessed, the AI model extracts speaker embeddings. These embeddings represent the unique characteristics of a voice. The system then maps the source voice onto a target voice profile, which may be a predefined character, a cloned voice, or a stylized variation.
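
One common way to reason about speaker embeddings is cosine similarity: the closer two embedding vectors point in the same direction, the more alike the voices are assumed to be. The sketch below uses made-up three-dimensional vectors purely for illustration; real embeddings usually have hundreds of dimensions and come from a trained encoder that is not shown here.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy embeddings (hypothetical values, not produced by a real model).
source = [0.9, 0.1, 0.2]      # the speaker's own voice
target = [0.1, 0.8, 0.5]      # the target voice profile
converted = [0.2, 0.7, 0.5]   # the output after conversion

# A successful conversion should sit closer to the target than to the source.
closer_to_target = (
    cosine_similarity(converted, target) > cosine_similarity(converted, source)
)
```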

This is where voice cloning, accent modification, and emotion control occur. Advanced APIs allow fine-grained control over parameters such as speaking speed, emotional intensity, and vocal age.
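
The controls mentioned above could be modeled on the client side roughly as follows. Every field name and range here is a hypothetical placeholder; each provider defines its own parameters, so treat this as a sketch of the validation pattern rather than a real API schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class VoiceSettings:
    """Hypothetical conversion settings; names and ranges are assumptions."""

    target_voice: str
    speaking_speed: float = 1.0       # 1.0 leaves the original pace unchanged
    emotional_intensity: float = 0.5  # 0.0 (flat) to 1.0 (very expressive)
    vocal_age: int = 30               # apparent age of the output voice

    def __post_init__(self) -> None:
        # Reject out-of-range values before they are sent to a server.
        if not 0.5 <= self.speaking_speed <= 2.0:
            raise ValueError("speaking_speed must be between 0.5 and 2.0")
        if not 0.0 <= self.emotional_intensity <= 1.0:
            raise ValueError("emotional_intensity must be between 0.0 and 1.0")
        if not 5 <= self.vocal_age <= 100:
            raise ValueError("vocal_age must be between 5 and 100")

    def to_json(self) -> str:
        """Serialize the settings as a JSON payload."""
        return json.dumps(asdict(self), sort_keys=True)


settings = VoiceSettings(target_voice="narrator", speaking_speed=1.2)
payload = settings.to_json()
```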

Real-Time Output Rendering

For real-time voice AI, latency is critical. Most leading AI Voice Changer APIs use streaming protocols such as WebSockets to deliver transformed audio back to the application with minimal delay. Well-optimized systems can achieve end-to-end latency below 300 milliseconds, which feels natural to human listeners.
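
A simple way to check whether a design fits the 300 millisecond figure is to add up the delay contributed by each stage. The component values below are illustrative assumptions, not measurements from any provider.

```python
LATENCY_BUDGET_MS = 300  # target from the paragraph above


def end_to_end_latency_ms(
    frame_ms: int, inference_ms: int, network_rtt_ms: int, playback_buffer_ms: int
) -> int:
    """Sum the main contributors to delay for one audio frame."""
    return frame_ms + inference_ms + network_rtt_ms + playback_buffer_ms


# Assumed values: 20 ms frames, 60 ms model inference,
# 80 ms network round trip, 40 ms playback (jitter) buffer.
total_ms = end_to_end_latency_ms(
    frame_ms=20, inference_ms=60, network_rtt_ms=80, playback_buffer_ms=40
)
within_budget = total_ms <= LATENCY_BUDGET_MS
print(total_ms, within_budget)  # 200 True
```

With these assumed numbers about 100 ms of headroom remains, which a slower network or a larger model could quickly consume.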

This capability makes real-time voice AI suitable for live conversations, multiplayer games, and interactive virtual experiences.

Key Features of a Modern AI Voice Changer API

Real-Time Voice Conversion

Real-time processing is the defining feature of a modern AI Voice Changer API. Instead of waiting for a file to upload and process, users experience voice transformation instantly. This is essential for:

- Live streaming and broadcasting

- Online meetings and calls

- Interactive gaming environments

Low latency not only improves user experience but also enables entirely new product categories built around voice interaction.

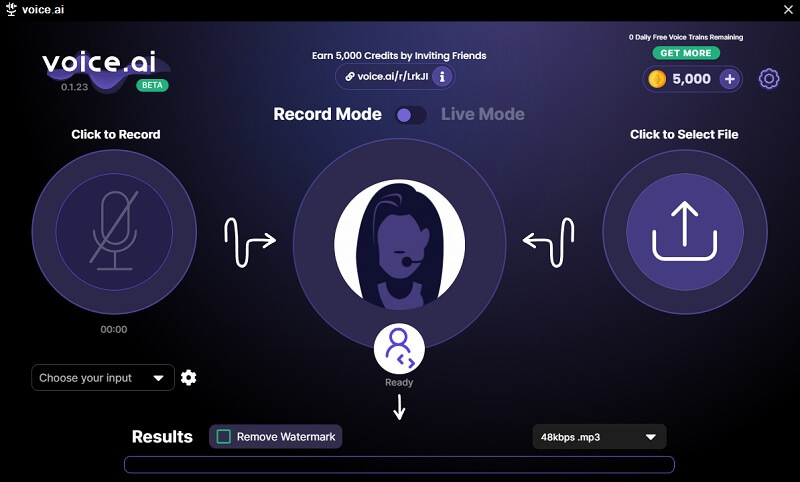

Voice Cloning and Custom Voice Creation

Voice cloning allows businesses to create unique, consistent brand voices or personalized digital personas. With as little as a few minutes of recorded speech, some APIs can generate a highly realistic voice model.

However, reputable providers emphasize ethical usage. Consent verification and secure voice data handling are increasingly standard, reflecting growing regulatory scrutiny around synthetic media.

Multi-Language and Accent Support

Global applications demand global voices. Leading AI voice changer platforms support multiple languages and regional accents, allowing companies to localize user experiences without hiring separate voice actors for each market.

According to a CSA Research study, 76% of online shoppers prefer to buy products with information in their native language, which suggests that multilingual voice AI can be a direct driver of engagement and trust.

Emotion and Style Control

Beyond basic voice conversion, advanced APIs enable emotional modulation. Developers can adjust parameters to produce calm, enthusiastic, serious, or empathetic tones, aligning voice output with brand personality or conversational context.

This emotional intelligence is especially valuable in customer service, education, and mental health applications where tone strongly influences user perception.

In the next section, we will explore real-world use cases of AI Voice Changer APIs across industries, followed by a comparison of real-time and non-real-time voice AI solutions.

Top Use Cases for AI Voice Changer APIs

Gaming and Virtual Worlds

In gaming and metaverse environments, immersion is everything. An AI Voice Changer API allows players to adopt character-specific voices in real time, enhancing role-playing experiences and social interaction. Instead of static voice filters, players can dynamically switch between personalities, accents, or even species-specific voices.

Major multiplayer platforms are already experimenting with real-time voice AI to reduce harassment, protect identity, and create safer online communities. By masking real voices while preserving emotional expression, voice AI improves both engagement and moderation.

Content Creation and Media Production

For creators, voice AI dramatically reduces production costs. YouTubers, podcasters, and video editors can generate multiple voice styles without hiring additional voice actors. This is particularly valuable for explainer videos, dubbing, and short-form social media content.

According to a report by PwC, AI-driven media production can reduce content creation costs by up to 30% while increasing output speed. AI Voice Changer APIs make this efficiency accessible to individual creators and small teams.

Customer Support and Call Centers

Call centers face challenges around consistency, privacy, and agent burnout. AI voice changers allow companies to standardize agent voices to match brand identity while masking personal voice data. This protects employee privacy and improves perceived professionalism.

Some enterprises also use real-time voice AI to adjust tone dynamically, ensuring empathetic responses during sensitive customer interactions.

Education and eLearning

In education, voice personalization improves comprehension and engagement. AI voice APIs enable adaptive narrators, multilingual tutors, and accessible learning materials for students with diverse needs.

Research from EdTechX shows that personalized audio learning can improve information retention by up to 25%, especially in language learning environments.

Accessibility and Assistive Technology

For users with speech impairments or those recovering from illness, AI Voice Changer APIs provide a powerful assistive layer. Users can speak naturally while the AI outputs a clearer or personalized voice, restoring confidence and independence.

Real-Time vs Non-Real-Time Voice AI APIs

Real-Time Voice Changer APIs

Real-time APIs are designed for live interactions. They prioritize low latency, streaming support, and continuous audio processing. Common use cases include calls, games, and live broadcasts.

- Latency optimized for live use

- Higher infrastructure demands

- Typically higher cost per minute

Non-Real-Time (Batch) Voice APIs

Non-real-time voice AI processes pre-recorded audio files. Without a strict latency budget, these APIs can run larger models over the full recording, so they are slower but often deliver higher quality and are more cost-effective for large-scale content production.

Performance Comparison

| Criteria | Real-Time API | Non-Real-Time API |
|---|---|---|
| Latency | Very Low | High |
| Best Use Case | Live interaction | Recorded content |
| Cost Efficiency | Medium | High |

Technical Integration Overview

Common API Architectures

Most AI Voice Changer APIs are offered through REST endpoints for batch processing and WebSocket or gRPC connections for streaming audio. SDKs are often available for popular languages such as JavaScript, Python, and Swift.
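
The two styles differ mainly in how audio is packaged. The sketch below builds, but does not send, a batch request and a streaming message sequence. The base URL, the `voice` query parameter, and the `start`/`stop` control messages are all hypothetical; a real provider's documentation defines the actual endpoints and message format.

```python
import json
import urllib.parse
import urllib.request
from typing import Iterable, Iterator, Union

API_BASE = "https://api.example.com/v1"  # hypothetical base URL


def build_batch_request(
    audio_bytes: bytes, target_voice: str, api_key: str
) -> urllib.request.Request:
    """Prepare a one-shot REST upload of a whole recording (not sent here)."""
    query = urllib.parse.urlencode({"voice": target_voice})
    return urllib.request.Request(
        url=f"{API_BASE}/convert?{query}",
        data=audio_bytes,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "audio/wav",
        },
        method="POST",
    )


def streaming_messages(
    frames: Iterable[bytes], target_voice: str
) -> Iterator[Union[str, bytes]]:
    """Yield the messages a streaming client might send over a WebSocket:
    a JSON control message, then raw audio frames, then a JSON stop message."""
    yield json.dumps({"type": "start", "voice": target_voice})
    for frame in frames:
        yield frame
    yield json.dumps({"type": "stop"})


request = build_batch_request(b"\x00\x01", "warm narrator", "KEY")
messages = list(streaming_messages([b"frame-1", b"frame-2"], "robot"))
```

The batch request carries the entire file in one body, while the streaming sequence lets the server start returning audio before the speaker has finished.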

Supported Platforms

- Web applications

- Mobile apps (iOS and Android)

- Desktop software

- Cloud-native systems

Typical Integration Flow

- Capture audio input from the user

- Stream audio to the AI Voice Changer API

- Receive transformed voice output

- Play or store the processed audio
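
The four steps above can be sketched end to end with generators. Everything here is a local stand-in: `capture_audio` synthesizes a tone instead of reading a microphone, and `transform_stream` is a placeholder for the API round trip that simply halves the volume, so the example runs without any network access.

```python
import io
import math
import struct
import wave
from typing import Iterable, Iterator

SAMPLE_RATE = 16000
FRAME_SAMPLES = 320  # 20 ms at 16 kHz


def capture_audio(duration_s: float = 0.1) -> Iterator[bytes]:
    """Step 1 stand-in: yield 16-bit PCM frames of a 220 Hz tone."""
    total = int(SAMPLE_RATE * duration_s)
    for start in range(0, total, FRAME_SAMPLES):
        count = min(FRAME_SAMPLES, total - start)
        samples = [
            int(12000 * math.sin(2 * math.pi * 220 * (start + i) / SAMPLE_RATE))
            for i in range(count)
        ]
        yield struct.pack(f"<{count}h", *samples)


def transform_stream(frames: Iterable[bytes]) -> Iterator[bytes]:
    """Steps 2 and 3 stand-in: a real client would send each frame to the
    API and yield the converted frame it receives back."""
    for frame in frames:
        count = len(frame) // 2
        samples = struct.unpack(f"<{count}h", frame)
        yield struct.pack(f"<{count}h", *(s // 2 for s in samples))


def store_wav(frames: Iterable[bytes]) -> bytes:
    """Step 4: write the processed frames into an in-memory WAV file."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(SAMPLE_RATE)
        for frame in frames:
            wav.writeframes(frame)
    return buffer.getvalue()


wav_bytes = store_wav(transform_stream(capture_audio()))
```

Because each stage is a generator, frames flow through one at a time, which mirrors how a streaming integration avoids waiting for the full recording.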

Security, Ethics, and Compliance in Voice AI

User Consent and Voice Rights

Ethical AI voice usage starts with consent. Responsible providers require explicit permission before cloning or modifying a person’s voice. This aligns with emerging regulations around synthetic media and deepfake prevention.

Data Privacy and Storage

Enterprise-grade APIs use encryption, access controls, and configurable data retention policies. Compliance with GDPR, ISO 27001, and regional data protection laws is increasingly a baseline requirement.

Preventing Voice Fraud and Misuse

To mitigate misuse, some platforms embed digital watermarks or detection signals into generated audio. These measures help identify AI-generated speech and maintain trust in voice-based systems.

Pricing Models for AI Voice Changer APIs

Pay-As-You-Go

Ideal for startups and experimentation, usage-based pricing charges per second or minute of processed audio.

Subscription Plans

Monthly plans offer predictable costs and are suitable for SaaS platforms with steady usage patterns.
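
To compare usage-based and subscription pricing, it helps to compute the break-even volume. The prices below are invented for illustration, and the sketch assumes a flat monthly fee that covers all usage; real plans often include a minute allowance plus overage charges.

```python
def pay_as_you_go_cents(minutes: int, cents_per_minute: int) -> int:
    """Total usage-based cost in cents."""
    return minutes * cents_per_minute


def break_even_minutes(monthly_fee_cents: int, cents_per_minute: int) -> float:
    """Monthly minutes at which both plans cost the same."""
    return monthly_fee_cents / cents_per_minute


def cheaper_plan(minutes: int, cents_per_minute: int, monthly_fee_cents: int) -> str:
    """Name the cheaper option for a given monthly volume."""
    if pay_as_you_go_cents(minutes, cents_per_minute) <= monthly_fee_cents:
        return "pay-as-you-go"
    return "subscription"


# Assumed prices: $0.02 per minute versus a $99 flat monthly plan.
threshold = break_even_minutes(monthly_fee_cents=9900, cents_per_minute=2)
print(threshold)  # 4950.0
```

Under these assumed prices, a team processing fewer than 4,950 minutes a month would pay less on usage-based pricing.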

Enterprise Pricing

Custom pricing includes dedicated infrastructure, advanced security, and service-level agreements (SLAs).

How to Choose the Best AI Voice Changer API

Key Evaluation Criteria

- Voice quality and naturalness

- Latency and performance

- Language and accent coverage

- Security and compliance

- Transparent pricing
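
One way to apply these criteria consistently is a weighted scorecard. The weights, the 1 to 5 scores, and the provider names below are placeholders to be replaced with your own priorities and test results.

```python
from typing import Dict

# Assumed weights (they sum to 1.0); adjust them to your project's priorities.
WEIGHTS: Dict[str, float] = {
    "quality": 0.30,
    "latency": 0.25,
    "languages": 0.15,
    "security": 0.20,
    "pricing": 0.10,
}


def weighted_score(scores: Dict[str, float]) -> float:
    """Combine per-criterion scores (1 to 5) into one weighted total."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical providers with made-up scores.
candidates = {
    "Provider A": {"quality": 5, "latency": 3, "languages": 4, "security": 4, "pricing": 2},
    "Provider B": {"quality": 4, "latency": 5, "languages": 3, "security": 4, "pricing": 4},
}
totals = {name: weighted_score(scores) for name, scores in candidates.items()}
best = max(totals, key=totals.get)
```

Writing the weights down before testing providers helps keep the comparison from drifting toward whichever demo sounded best.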

Common Mistakes to Avoid

Businesses often underestimate infrastructure costs or overlook ethical considerations. Choosing a provider without clear documentation or compliance guarantees can lead to long-term risk.

Why Use AI.DuyThin.Digital for Voice AI Research

AI.DuyThin.Digital is built to help businesses and individuals navigate the rapidly evolving AI landscape. Our platform offers detailed reviews, feature comparisons, and transparent pricing insights curated by Vietnam’s leading AI community.

By centralizing research and real-world evaluations, we help decision-makers save time, reduce uncertainty, and choose AI solutions with confidence.

Future Trends in AI Voice Changer APIs

Ultra-Low Latency Voice AI

Advances in edge computing and model optimization are pushing latency closer to human reaction times, enabling seamless voice interactions.

Emotion-Adaptive Voice Models

Next-generation systems will adjust tone and emotion automatically based on conversation context and user sentiment.

Voice AI as a Platform Service

Voice capabilities are evolving into modular services that integrate with broader AI ecosystems, from analytics to personalization engines.

Conclusion: Is an AI Voice Changer API Right for Your Project?

An AI Voice Changer API is more than a novelty. It is a strategic tool that enables scalable, personalized, and engaging voice experiences across industries. Whether you are building a game, a SaaS platform, or a global customer support system, real-time voice AI can deliver measurable value.

To explore and compare the best AI voice solutions for your needs, visit AI.DuyThin.Digital and make informed decisions backed by expert analysis and community insight.

Frequently Asked Questions (FAQ)

Is using an AI Voice Changer API legal?

Generally yes, when used with proper consent and in compliance with local regulations. Always choose providers with strong ethical guidelines.

How accurate is real-time voice cloning?

Accuracy depends on training data quality and model sophistication. Some leading APIs achieve high speaker similarity from only a few minutes of recorded speech.

Can AI Voice Changer APIs scale for enterprise use?

Enterprise-grade solutions are designed for high concurrency, reliability, and global deployment.

What skills are required to integrate a voice AI API?

Basic programming knowledge and familiarity with APIs are sufficient. Most providers offer documentation and SDKs to simplify integration.

Author: AI Research Team at AI.DuyThin.Digital – reviewing and comparing AI solutions to help businesses and creators make smarter decisions.