Are AI Voice Changers Safe? Privacy & Security Explained

AI voice changers are no longer niche tools used only by audio engineers or gamers. Today, they power marketing campaigns, customer service bots, podcasts, video dubbing, and even internal business communications. With just a few clicks, anyone can transform their voice or create a realistic synthetic clone of it. But behind this convenience lies a question many users are now asking: Is an AI voice changer safe?

As AI voice technology becomes more accessible, so do the risks related to privacy, data security, and misuse. Voice is not just sound; it is biometric data tied to identity, trust, and reputation. In this article, we take a clear, expert-driven look at how AI voice changers work, why safety matters, and what real privacy risks users should understand before adopting these tools for personal or business use.

What Is an AI Voice Changer and How Does It Work?

Definition of an AI Voice Changer

An AI voice changer is a software tool that uses artificial intelligence to modify, synthesize, or replicate human voices. Unlike traditional voice effects that simply adjust pitch or speed, modern AI voice changers rely on machine learning models trained on large datasets of human speech. This allows them to generate voices that sound natural, expressive, and emotionally realistic.
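To make the contrast concrete, here is a minimal sketch of the kind of traditional effect mentioned above: a naive pitch shift done by resampling. It uses no machine learning at all. The sample rate, function names, and test tone are assumptions chosen for illustration, not taken from any particular product.

```python
import math

SAMPLE_RATE = 16_000  # samples per second (assumed for this sketch)


def sine_wave(freq_hz, seconds, sample_rate=SAMPLE_RATE):
    """Generate a pure test tone as a list of floats in [-1, 1]."""
    n = int(seconds * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


def resample_pitch_shift(samples, factor):
    """Naive pitch shift: read the input `factor` times faster.

    Uses linear interpolation between neighbouring samples. A factor above
    1.0 raises the pitch and shortens the audio by the same ratio.
    """
    out = []
    pos = 0.0
    last = len(samples) - 1
    while pos < last:
        i = int(pos)
        frac = pos - i
        out.append(samples[i] * (1 - frac) + samples[i + 1] * frac)
        pos += factor
    return out


def estimate_frequency(samples, sample_rate=SAMPLE_RATE):
    """Rough frequency estimate from counting upward zero crossings."""
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)
    return crossings * sample_rate / len(samples)


original = sine_wave(200, 1.0)
shifted = resample_pitch_shift(original, 1.5)
print(round(estimate_frequency(original)), round(estimate_frequency(shifted)))
```

An effect like this changes pitch and speed together and leaves the speaker recognizable, which is why it is a different category from a model that learns a voice.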

These tools are commonly used for content creation, entertainment, accessibility, language localization, and increasingly, business automation.

AI Voice Cloning vs Real-Time Voice Changing

Not all AI voice changers work the same way. Understanding the difference is essential for evaluating safety.

- Real-time voice changers modify your voice instantly during calls, streams, or recordings.

- AI voice cloning tools create a digital voice model that can generate speech independently of the original speaker.

Voice cloning typically requires uploading voice samples, which raises greater privacy and security concerns than real-time modulation.

Machine Learning and Voice Synthesis

AI voice changers are powered by deep learning: neural networks trained for speech synthesis and voice conversion. These systems analyze vocal characteristics including tone, pitch, cadence, accent, and emotional inflection. The more high-quality data they receive, the more accurate the generated voice generally becomes.

This dependence on data is exactly why privacy questions matter. Voice data, once collected, can potentially be stored, reused, or exposed if not properly protected.

Cloud-Based vs Local Processing

From a security standpoint, where processing happens is critical:

- Cloud-based AI voice changers send voice data to remote servers for processing.

- Local or on-device tools process voice data directly on your computer.

Cloud solutions often offer better performance and scalability but introduce additional risks related to data transmission, storage, and third-party access. Local tools generally provide better privacy control but may lack advanced features.

Why AI Voice Changer Safety Is a Growing Concern

Rapid Growth of AI Voice Technology

The global AI voice market has grown rapidly in recent years, driven by demand for virtual assistants, voiceovers, and automated customer support. According to industry research, synthetic voice technology adoption has increased significantly across media, marketing, and enterprise environments.

With this growth comes wider exposure to potential misuse, especially as tools become easier for non-experts to use.

The Rise of Voice Deepfakes and Audio Fraud

One of the biggest safety concerns surrounding AI voice changers is voice deepfake abuse. Fraudsters have already used cloned voices to impersonate executives, family members, and public figures.

In several documented cases, AI-generated voices were used to authorize fraudulent financial transfers or manipulate employees into revealing sensitive information. These incidents highlight that AI voice security risks are not theoretical; they are already happening.

Regulatory and Public Scrutiny

Governments and regulatory bodies are paying closer attention to AI-generated media. Voice cloning now sits at the intersection of data protection, identity theft, and consumer protection laws.

As awareness grows, users are increasingly cautious and want clear answers about how their data is handled, stored, and protected.

Privacy Risks of AI Voice Changers

What Data Do AI Voice Changers Collect?

To function effectively, most AI voice changers collect some form of user data. This may include:

- Voice recordings or voice samples

- Usage logs and timestamps

- Device information and IP addresses

- Account-related metadata

Voice data is particularly sensitive because it can be considered biometric information, similar to fingerprints or facial recognition data.

Can AI Voice Changers Store or Reuse Your Voice?

This is one of the most important questions users should ask. Some AI voice platforms explicitly state that uploaded voice samples may be retained to improve their models. Others allow users to opt out of data reuse, while less transparent tools provide little clarity at all.

From a privacy standpoint, lack of clear data retention policies is a red flag. Once a voice model is trained, it may be difficult to fully delete or revoke.
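As a rough illustration of what a clear retention policy can look like in practice, the sketch below splits stored samples into those to keep and those to delete, and treats model training as opt-in. The `VoiceSample` record, the 30-day window, and the `allow_training` flag are hypothetical; real platforms define their own rules.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class VoiceSample:
    sample_id: str
    uploaded_at: datetime
    allow_training: bool = False  # opt-in, off by default (assumed policy)


def apply_retention(samples, now, max_age=timedelta(days=30)):
    """Split samples into (kept, expired) according to a retention window."""
    kept, expired = [], []
    for sample in samples:
        if now - sample.uploaded_at > max_age:
            expired.append(sample)
        else:
            kept.append(sample)
    return kept, expired


def training_set(samples):
    """Only samples whose owner explicitly opted in may be used for training."""
    return [s for s in samples if s.allow_training]


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
samples = [
    VoiceSample("a", datetime(2024, 12, 1, tzinfo=timezone.utc)),
    VoiceSample("b", datetime(2025, 1, 20, tzinfo=timezone.utc), allow_training=True),
    VoiceSample("c", datetime(2025, 1, 25, tzinfo=timezone.utc)),
]
kept, expired = apply_retention(samples, now)
print([s.sample_id for s in kept], [s.sample_id for s in expired])
```

Note that this only covers raw recordings. A model already trained on a sample is a separate artifact, which is exactly why revoking a trained voice is harder than deleting a file.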

Risks of Data Leakage and Unauthorized Access

Cloud-based AI voice changers face risks similar to other online services:

- Data breaches exposing stored voice recordings

- Unauthorized internal access by staff or contractors

- Third-party integrations that expand the attack surface

If voice data is compromised, the impact can be severe, especially if the voice can be used to impersonate the user elsewhere.

Security Risks You Should Be Aware Of

Voice Spoofing and Identity Theft

AI voice changers can be misused to spoof identities. When combined with publicly available audio from social media or videos, attackers can generate convincing fake voices. This creates serious risks for:

- Financial fraud

- Corporate espionage

- Social engineering attacks

Security experts increasingly warn organizations to avoid using voice alone as a verification method.
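One way to picture "not voice alone" is to require a second, independent factor before approving a sensitive action. The sketch below pairs a voice-match score with a one-time code computed as in RFC 4226 (HOTP). The `voice_score` input, the 0.9 threshold, and the `approve_transfer` function are assumptions for illustration; a real deployment would rely on an audited authentication library.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password as specified in RFC 4226."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def approve_transfer(voice_score: float, submitted_code: str,
                     secret: bytes, counter: int,
                     voice_threshold: float = 0.9) -> bool:
    """Approve only if the voice matches AND the one-time code is correct."""
    code_ok = hmac.compare_digest(submitted_code, hotp(secret, counter))
    return voice_score >= voice_threshold and code_ok


secret = b"12345678901234567890"  # test secret from RFC 4226, not for real use
# A cloned voice may score highly, but without the code the request fails.
print(approve_transfer(0.99, "000000", secret, counter=0))
print(approve_transfer(0.99, hotp(secret, 0), secret, counter=0))
```

The point of the design is that a convincing cloned voice only defeats one of the two checks.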

Malware and Unsafe AI Voice Applications

Not all AI voice tools are legitimate. Some free or unofficial downloads may contain malware, spyware, or hidden data collection mechanisms. Users who install voice changer software without proper verification risk exposing their devices and networks.

Common warning signs include excessive permission requests, unclear company information, and lack of security documentation.
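A basic verification step, where the vendor publishes one, is to compare the SHA-256 checksum of a downloaded installer with the published value. The sketch below shows the idea; the function names are illustrative, and the check is only meaningful if the published checksum comes from a trusted channel such as the vendor's official HTTPS site.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=65536):
    """Hash a file in chunks so large installers need not fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def matches_published_checksum(path, published_hex):
    """Return True if the file's SHA-256 equals the published hex digest."""
    expected = published_hex.strip().lower()
    return hmac.compare_digest(sha256_of_file(path), expected)
```

A matching checksum shows the file was not altered in transit. It does not prove the software itself is trustworthy, so the other warning signs still apply.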

API and Cloud Infrastructure Vulnerabilities

AI voice platforms often rely on APIs and cloud infrastructure. Poorly secured APIs, weak authentication, or lack of encryption can create vulnerabilities that attackers may exploit.

Reputable providers invest heavily in encryption, access controls, and regular security audits. Tools that do not clearly communicate these measures should be approached with caution.
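To give a sense of what "strong authentication" can mean for an API, here is a minimal sketch of HMAC-signed requests with a timestamp to limit replay. The message layout, the 300-second window, and the function names are assumptions for illustration; actual providers document their own signing schemes, and transport encryption (TLS) is still needed on top.

```python
import hashlib
import hmac

MAX_SKEW_SECONDS = 300  # assumed replay window for this sketch


def sign_request(api_secret: bytes, timestamp: int, body: bytes) -> str:
    """Sign the timestamp and body with a shared secret (HMAC-SHA256)."""
    message = str(timestamp).encode() + b"." + body
    return hmac.new(api_secret, message, hashlib.sha256).hexdigest()


def verify_request(api_secret: bytes, timestamp: int, body: bytes,
                   signature: str, now: int) -> bool:
    """Reject stale requests, then compare signatures in constant time."""
    if abs(now - timestamp) > MAX_SKEW_SECONDS:
        return False
    expected = sign_request(api_secret, timestamp, body)
    return hmac.compare_digest(expected, signature)


secret = b"demo-secret"  # placeholder; real secrets belong in a secrets manager
sig = sign_request(secret, 1_700_000_000, b"voice-sample-bytes")
print(verify_request(secret, 1_700_000_000, b"voice-sample-bytes", sig, now=1_700_000_010))
```

With a scheme like this, an attacker who intercepts a request cannot alter the audio payload or replay it much later without the signature check failing.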

Legal and Ethical Considerations of AI Voice Changers

Is Using an AI Voice Changer Legal?

The legality of using an AI voice changer depends largely on how and where it is used. In most countries, using an AI voice changer for personal entertainment, accessibility, or creative projects is legal. However, problems arise when the technology is used to deceive, impersonate, or profit from someone else’s identity without consent.

Many jurisdictions are now extending existing laws on fraud, identity theft, and data protection to cover AI-generated voices. In business contexts, unauthorized voice cloning can violate consumer protection laws and advertising regulations.

Consent and Voice Ownership

Voice ownership is a complex legal and ethical issue. While your voice is part of your personal identity, the legal definition of who owns a digital voice model remains unclear in many regions.

Ethically, experts agree on one principle: explicit consent is essential. Using someone else’s voice, whether a celebrity or a private individual, without permission raises serious ethical concerns and may expose users and companies to legal risk.

“Voice is biometric data. Treating it casually creates long-term risks for both individuals and organizations.” — AI Ethics Researcher, University of Oxford

Ethical Use of AI Voice Technology

Responsible AI voice usage goes beyond legality. Ethical best practices include:

- Disclosing when a voice is AI-generated

- Avoiding deceptive or manipulative applications

- Respecting cultural and personal identity boundaries

- Implementing safeguards against misuse

As AI adoption grows, ethical transparency is becoming a competitive advantage rather than a limitation.

How to Identify a Safe AI Voice Changer

Essential Security and Privacy Features

A safe AI voice changer should clearly demonstrate how it protects users. Key features to look for include:

- Encryption of voice data in transit and at rest (true end-to-end encryption is rarely possible when a server has to process the audio)

- Clear and accessible privacy policy

- User control over data retention and deletion

- Compliance with data protection regulations such as GDPR

Transparency is often the strongest indicator of trustworthiness.

Red Flags That Signal Potential Risk

Users should be cautious if an AI voice changer shows any of the following warning signs:

- No information about the company behind the product

- Vague or missing terms of service

- Mandatory voice data storage without opt-out

- Requests for unnecessary device permissions

In security, what a company does not disclose can be as important as what it does.
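For teams that evaluate several tools, the warning signs above can be turned into a simple, repeatable checklist. The sketch below is one hypothetical way to do that; the `ToolProfile` fields and flag wording are assumptions, and the answers still have to come from reading each vendor's documentation.

```python
from dataclasses import dataclass, field


@dataclass
class ToolProfile:
    company_identified: bool
    has_terms_of_service: bool
    storage_opt_out: bool
    requested_permissions: set = field(default_factory=set)
    needed_permissions: set = field(default_factory=set)


def red_flags(tool: ToolProfile) -> list:
    """Return a list of human-readable warnings for one tool."""
    flags = []
    if not tool.company_identified:
        flags.append("no information about the company")
    if not tool.has_terms_of_service:
        flags.append("vague or missing terms of service")
    if not tool.storage_opt_out:
        flags.append("mandatory voice data storage without opt-out")
    extra = tool.requested_permissions - tool.needed_permissions
    if extra:
        flags.append("unnecessary permissions: " + ", ".join(sorted(extra)))
    return flags


tool = ToolProfile(
    company_identified=True,
    has_terms_of_service=True,
    storage_opt_out=False,
    requested_permissions={"microphone", "contacts", "location"},
    needed_permissions={"microphone"},
)
for flag in red_flags(tool):
    print(flag)
```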

Questions to Ask Before Using an AI Voice Tool

Before uploading your voice, ask:

- Is my voice data deleted after use?

- Can my data be used to train AI models?

- Who has access to stored recordings?

- What happens if the service shuts down?

Responsible providers answer these questions clearly and directly.

Are AI Voice Changers Safe for Business Use?

Marketing, Media, and Advertising

Businesses increasingly use AI voice changers for ads, explainer videos, and multilingual campaigns. When used responsibly, these tools can save time and reduce costs. However, misuse can quickly damage brand trust.

Brands should ensure voice assets are licensed, transparent, and compliant with local advertising regulations.

Customer Service and Call Automation

AI-generated voices are common in IVR systems and chatbots. While efficient, they also raise privacy concerns, especially when handling sensitive customer data.

Enterprises should prioritize:

- Secure data handling practices

- Access controls and audit logs

- Clear disclosure that customers are interacting with AI

Internal Enterprise Applications

For internal use, such as training or workflow automation, AI voice changers are generally safer if deployed within controlled environments. Enterprise-grade solutions often include stronger security measures than consumer tools.

Best Practices to Protect Your Privacy When Using AI Voice Changers

Choose Trusted Platforms

Selecting reputable AI providers is the first line of defense. Platforms that have independent reviews and publish their security certifications and pricing transparently are typically more reliable.

Avoid Sharing Sensitive Voice Data

If possible, avoid uploading voices tied to financial authority, executive roles, or personal security. Synthetic or anonymized voices reduce risk.

Read Privacy Policies Carefully

Pay attention to:

- Data retention duration

- Third-party data sharing

- User rights regarding deletion

Use Secure Networks and Devices

Avoid using AI voice tools on public or unsecured networks. Basic cybersecurity hygiene significantly reduces exposure.

The Future of AI Voice Safety and Regulation

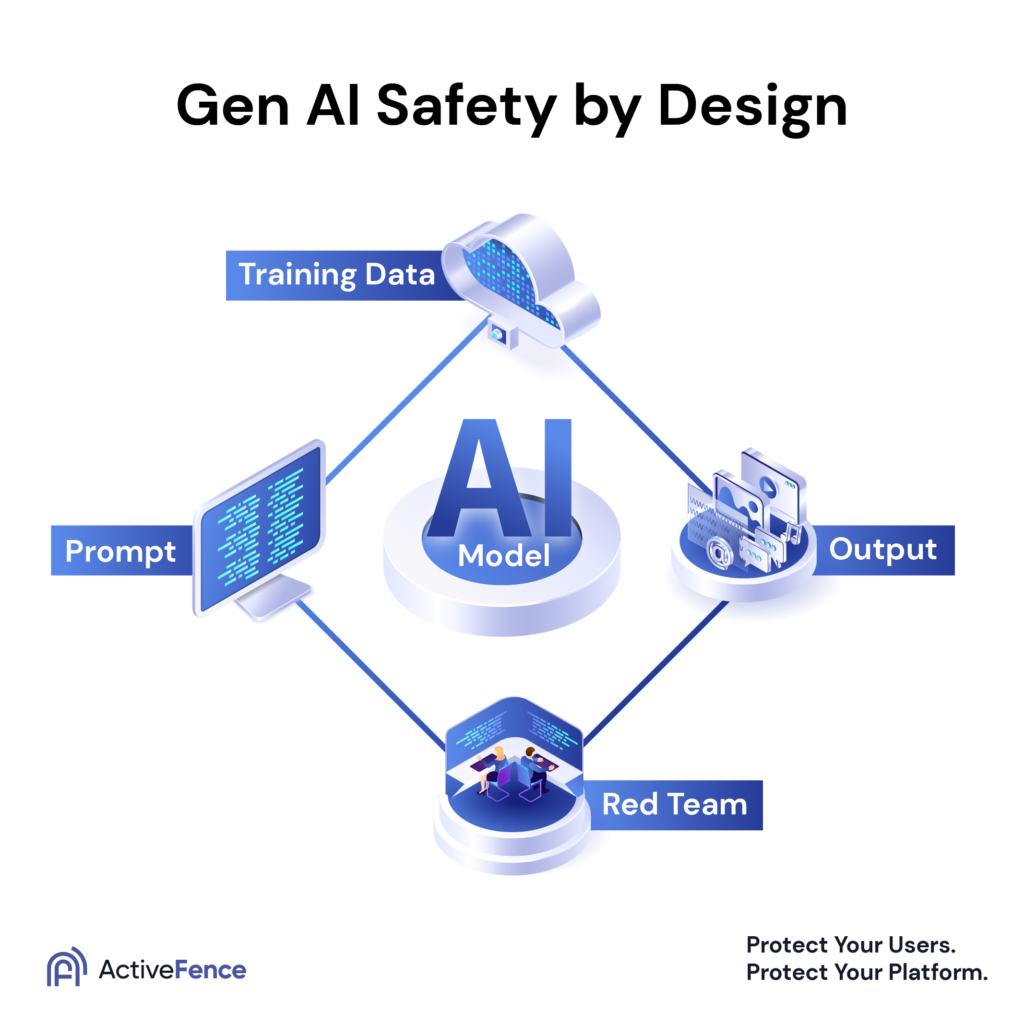

AI Safety by Design

Leading AI researchers advocate for “safety by design,” where privacy and security are built into AI systems from the start rather than added later. In practice, this means minimizing data collection and reducing the potential for misuse.

Regulatory Trends

Governments worldwide are introducing rules to address deepfakes and AI-generated media. These regulations aim to protect individuals while allowing innovation to continue responsibly.

Advances in Detection and Watermarking

New technologies can detect synthetic voices and embed inaudible markers in AI-generated audio. These tools may become essential safeguards in the near future.
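The general idea behind audio watermarking can be shown with a toy example: add a low-level pseudo-random pattern derived from a secret key, then detect it later by correlating the audio against the same pattern. This is a simplified sketch under stated assumptions (the key, strength, and threshold values are arbitrary), not how any specific product works. Production watermarks are shaped to stay inaudible and to survive compression and editing, which this sketch does not attempt.

```python
import math
import random


def keyed_sequence(key: int, n: int):
    """Pseudo-random sequence of +1/-1 values derived from a secret key."""
    rng = random.Random(key)
    return [1.0 if rng.random() < 0.5 else -1.0 for _ in range(n)]


def embed_watermark(samples, key, strength=0.05):
    """Add a low-level keyed pattern to the audio samples."""
    marks = keyed_sequence(key, len(samples))
    return [s + strength * m for s, m in zip(samples, marks)]


def watermark_score(samples, key):
    """Average correlation between the audio and the keyed pattern."""
    marks = keyed_sequence(key, len(samples))
    return sum(s * m for s, m in zip(samples, marks)) / len(samples)


def is_watermarked(samples, key, threshold=0.025):
    """Decide that the mark is present if the correlation exceeds a threshold."""
    return watermark_score(samples, key) > threshold


# Three seconds of a 220 Hz tone at 16 kHz stands in for generated speech.
audio = [0.5 * math.sin(2 * math.pi * 220 * i / 16_000) for i in range(48_000)]
marked = embed_watermark(audio, key=1234)
print(is_watermarked(marked, key=1234), is_watermarked(audio, key=1234))
```

Detection here depends on knowing the key, which is why a watermark checked with the wrong key looks the same as unmarked audio.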

Final Verdict: Are AI Voice Changers Safe?

AI voice changers are neither inherently safe nor inherently dangerous. Their safety depends on the provider, the use case, and the user’s awareness. When used responsibly and with trusted platforms, AI voice changers can be powerful tools. When used carelessly, they can create serious privacy and security risks.

The key takeaway is simple: informed choices lead to safer outcomes.

Frequently Asked Questions (FAQ)

Is it safe to upload my voice to an AI voice changer?

It can be safe if the provider clearly explains how your data is stored, used, and deleted. Avoid tools that lack transparency.

Can AI voice changers steal my identity?

The risk exists if your voice data is misused or leaked. This is why strong security practices and trusted platforms matter.

Are AI voice changers legal for business use?

Yes, when used ethically, transparently, and with proper consent. Regulations vary by country.

How can I choose a trustworthy AI voice solution?

Look for expert reviews, clear policies, and independent comparisons from reliable AI communities.

Explore Safe and Trusted AI Solutions

If you want to save time researching AI tools and make informed decisions, explore expert reviews, feature comparisons, and transparent pricing at ai.duythin.digital.

Our platform helps businesses and individuals choose AI solutions that balance innovation with privacy, security, and trust.