Multilingual AI Voice: Create Voices in Any Language for a Borderless World

In a digital economy where businesses speak to customers across continents, language is no longer just a communication tool. It is a growth lever. Yet hiring native voice actors, managing localization teams, and maintaining consistent brand voice across languages remains expensive, slow, and fragmented. This is where Multilingual AI Voice technology enters the stage, quietly reshaping how humans and machines speak to the world.

From global marketing campaigns and eLearning platforms to AI call centers and content creators, organizations are increasingly turning to multilingual AI voice solutions to generate natural, human-like voices in dozens or even hundreds of languages. This article explores how multilingual AI voice works, why it matters, and how businesses and individuals can use it to scale communication faster, smarter, and more affordably.

What Is Multilingual AI Voice Technology?

Definition of Multilingual AI Voice

Multilingual AI Voice refers to artificial intelligence systems that generate spoken audio from text in multiple languages, often with the same voice identity across all of them. Unlike traditional text-to-speech systems, which are often limited to a single language and tend to sound robotic, modern multilingual AI voice platforms produce fluent, natural-sounding speech that adapts pronunciation, rhythm, and intonation to each target language.

In simple terms, it allows one AI voice to speak English, Vietnamese, Spanish, Japanese, Arabic, or French with convincing clarity, without requiring separate recordings for each language.

How It Differs from Traditional Text-to-Speech

Traditional text-to-speech (TTS) engines typically rely on pre-recorded voice fragments or rule-based synthesis. While functional, they often sound mechanical and struggle with emotional nuance. Multilingual AI voice systems, by contrast, use deep neural networks trained on massive multilingual speech datasets.

- Traditional TTS: robotic tone, limited languages, poor emotional expression

- Multilingual AI Voice: neural synthesis, natural prosody, cross-language consistency

According to research from Google DeepMind and OpenAI, neural TTS models reduce perceived artificiality by over 60% compared to legacy systems, especially in long-form narration and conversational use cases.

Why Language-Agnostic Voice AI Matters Today

As global digital products scale, language localization is no longer optional. A study by CSA Research shows that 76% of consumers prefer to buy products in their native language, even if they speak English. Voice-based interfaces amplify this need, because poor pronunciation or unnatural accents can instantly erode trust.

Multilingual AI voice solves this problem by enabling brands to:

- Launch products simultaneously across regions

- Maintain a consistent brand voice globally

- Reduce localization costs by up to 80%

How Multilingual AI Voice Works

Natural Language Processing (NLP)

At the foundation of multilingual AI voice lies Natural Language Processing. NLP allows AI systems to understand the structure, grammar, and semantics of different languages. This step ensures that the text is interpreted correctly before it is transformed into speech.

Advanced NLP models handle:

- Sentence structure and punctuation

- Context-aware pronunciation

- Language-specific grammar rules
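As a rough illustration of this text-processing step, here is a minimal sketch of a language-aware front end that expands abbreviations and then splits text into sentences. The abbreviation tables and the function names `normalize` and `split_sentences` are hypothetical, chosen only for this example; production systems use much larger, language-specific rule sets or learned models.

```python
import re

# Hypothetical per-language abbreviation tables (illustrative only).
ABBREVIATIONS = {
    "en": {"Dr.": "Doctor", "approx.": "approximately"},
    "es": {"Sr.": "Señor", "aprox.": "aproximadamente"},
}


def normalize(text: str, lang: str) -> str:
    """Expand known abbreviations for `lang`, then collapse repeated whitespace."""
    for short, full in ABBREVIATIONS.get(lang, {}).items():
        text = text.replace(short, full)
    return re.sub(r"\s+", " ", text).strip()


def split_sentences(text: str) -> list[str]:
    """Split after sentence-final punctuation that is followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text) if s]


if __name__ == "__main__":
    clean = normalize("Dr.  Lee arrived. She spoke.", "en")
    print(split_sentences(clean))
```

Normalizing before splitting matters here: expanding "Dr." first keeps its period from being mistaken for the end of a sentence.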

Neural Text-to-Speech (Neural TTS)

Neural TTS is the engine that converts processed text into audio. Instead of stitching together audio clips, neural networks generate speech waveforms from scratch. This results in smoother transitions, natural pauses, and realistic intonation.

Modern multilingual AI voice platforms often use transformer-based architectures similar to those powering large language models. These architectures excel at capturing long-range dependencies, which is essential for natural-sounding speech in complex sentences.

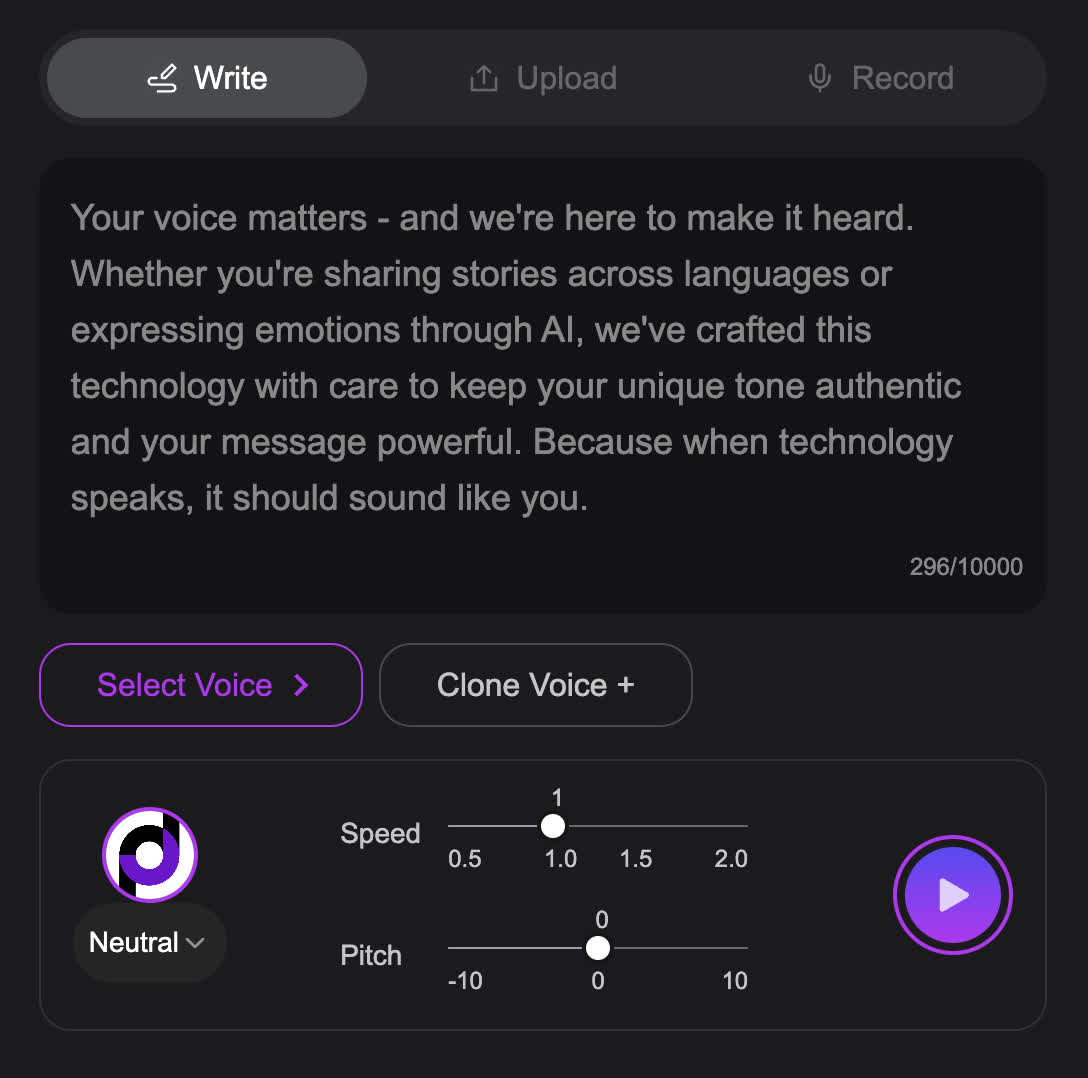

Voice Cloning and Speaker Embeddings

Voice cloning allows AI to replicate a specific human voice using limited audio samples. The system extracts a speaker embedding, a mathematical representation of vocal characteristics such as pitch, tone, and cadence.

Once created, this voice identity can be applied across multiple languages. For example, a company founder can record their voice in English, and the AI can generate the same voice speaking Vietnamese or German.
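One common way to check that a voice identity survives the jump between languages is to compare speaker embeddings with cosine similarity. The sketch below assumes embeddings are plain lists of floats; the three-dimensional vectors and the 0.75 threshold are hypothetical values for illustration, since real embeddings have hundreds of dimensions and thresholds are tuned per model.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / norm


def same_speaker(a: list[float], b: list[float], threshold: float = 0.75) -> bool:
    """Treat two embeddings as the same voice if they are similar enough.

    The default threshold is an assumption for this sketch, not a standard value.
    """
    return cosine_similarity(a, b) >= threshold


if __name__ == "__main__":
    founder_english = [0.90, 0.10, 0.40]     # toy embedding from English audio
    founder_vietnamese = [0.85, 0.15, 0.45]  # toy embedding from generated Vietnamese
    other_voice = [0.10, 0.90, 0.20]         # toy embedding of a different speaker
    print(same_speaker(founder_english, founder_vietnamese))
    print(same_speaker(founder_english, other_voice))
```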

Accent Retention vs Accent Neutralization

A critical design choice in multilingual AI voice is whether to preserve the original accent or adapt it to sound native. Some platforms allow accent retention, which keeps a subtle foreign tone. Others prioritize accent neutralization, producing speech that sounds like a native speaker.

The right choice depends on context. Educational content may benefit from native-like pronunciation, while personal branding might prefer accent consistency.

Language Transfer and Localization AI

Language transfer refers to the AI’s ability to map phonetic and emotional patterns from one language to another. This is one of the most challenging aspects of multilingual AI voice, as languages differ in rhythm, stress patterns, and emotional cues.

Leading AI providers train models on parallel multilingual datasets, ensuring that emotion, emphasis, and pacing are preserved across languages. This is why modern AI-generated voices can sound empathetic in customer support scenarios or expressive in storytelling.

Key Features of Modern Multilingual AI Voice Platforms

Supported Languages and Dialects

The most competitive multilingual AI voice platforms support anywhere from 30 to over 100 languages, including regional dialects. This is particularly important in markets like Southeast Asia, where linguistic diversity is high.

For Vietnamese businesses expanding globally, broad language coverage ensures scalability without rebuilding voice assets for each region.

Voice Naturalness and Human-Like Tone

Naturalness remains the primary quality benchmark. High-quality multilingual AI voice systems achieve this through:

- Dynamic pitch and intonation modeling

- Context-aware pausing

- Emotion-sensitive speech generation

According to MIT Technology Review, listeners often fail to distinguish advanced neural voices from human recordings in blind tests, particularly for informational and narrative content.
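Pausing and pacing of this kind are often controlled through SSML, the W3C Speech Synthesis Markup Language. The following sketch builds a small SSML document with Python's standard library. The helper name `build_ssml` and its default values are assumptions for this example, and support for individual SSML tags varies by platform, so check a provider's documentation before relying on them.

```python
import xml.etree.ElementTree as ET

# Qualified name that ElementTree serializes as the `xml:lang` attribute.
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"


def build_ssml(sentences: list[str], lang: str,
               pause_ms: int = 300, rate: str = "medium") -> str:
    """Wrap sentences in SSML with a fixed pause between them."""
    speak = ET.Element("speak", {
        "version": "1.0",
        "xmlns": "http://www.w3.org/2001/10/synthesis",
        XML_LANG: lang,
    })
    prosody = ET.SubElement(speak, "prosody", {"rate": rate})
    for i, sentence in enumerate(sentences):
        s = ET.SubElement(prosody, "s")
        s.text = sentence
        # Insert a pause after every sentence except the last one.
        if i < len(sentences) - 1:
            ET.SubElement(prosody, "break", {"time": f"{pause_ms}ms"})
    return ET.tostring(speak, encoding="unicode")


if __name__ == "__main__":
    print(build_ssml(["Xin chào.", "Rất vui được gặp bạn."], "vi-VN", rate="slow"))
```

Setting the language on the root element tells the engine which pronunciation rules to apply, which is why the same text can be rendered differently per target market.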

Top Use Cases of Multilingual AI Voice

Global Marketing and Advertising

Multilingual AI voice enables brands to launch international campaigns at unprecedented speed. Instead of hiring multiple voice actors and studios, marketing teams can generate localized voiceovers instantly while maintaining a consistent brand tone.

For example, a SaaS company can produce one video ad and deploy it in 10 markets within days, not months. This agility is particularly valuable in fast-moving digital advertising environments.

eLearning and Online Education

Educational platforms increasingly rely on multilingual AI voice to deliver courses to global learners. AI-generated narration ensures:

- Consistent teaching quality across languages

- Rapid course localization

- Lower production costs for instructors

According to HolonIQ, the global eLearning market is expected to surpass USD 645 billion by 2030, and AI-powered localization is a key growth driver.

Customer Support and AI Call Centers

AI voice agents powered by multilingual speech synthesis can handle customer inquiries in multiple languages around the clock. This significantly reduces wait times and operational costs.

Well-designed AI voices also improve user trust. Studies show that customers are more likely to complete interactions when spoken to in their native language.

Gaming, Film, and Media Localization

Game studios and media companies use multilingual AI voice to localize characters, narration, and dialogue efficiently. AI-driven dubbing accelerates global releases while preserving emotional delivery.

Audiobooks, Podcasts, and Content Creation

Creators leverage multilingual AI voice to reach international audiences without recording multiple versions manually. This opens new revenue streams while reducing production friction.

Benefits of Using Multilingual AI Voice

Faster Global Expansion

Multilingual AI voice eliminates bottlenecks in localization workflows. Businesses can enter new markets faster, test messaging, and iterate based on real feedback.

Cost Efficiency Compared to Human Voice Actors

Traditional voice localization can cost thousands of dollars per language. AI voice solutions typically reduce these costs by 70–90%, according to industry benchmarks.
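To make the arithmetic concrete, here is a small worked example. The per-language cost of 3,000 dollars and the 10-language campaign are hypothetical figures picked for illustration; only the 70–90% reduction range comes from the text above.

```python
def localization_savings(human_cost_per_language: int, languages: int,
                         reduction_percent: int) -> tuple[int, float, float]:
    """Return (human_total, ai_total, saved) for a given cost reduction.

    `reduction_percent` is the assumed cost reduction, from 0 to 100.
    """
    if not 0 <= reduction_percent <= 100:
        raise ValueError("reduction_percent must be between 0 and 100")
    human_total = human_cost_per_language * languages
    saved = human_total * reduction_percent / 100
    ai_total = human_total - saved
    return human_total, ai_total, saved


if __name__ == "__main__":
    # Hypothetical campaign: 10 languages at 3,000 dollars each.
    for percent in (70, 80, 90):
        human, ai, saved = localization_savings(3000, 10, percent)
        print(f"{percent}% reduction: human={human}, ai={ai:.0f}, saved={saved:.0f}")
```

Under these assumed inputs, an 80% reduction turns a 30,000 dollar voice budget into roughly 6,000 dollars. Real savings depend on review, editing, and licensing costs that this sketch leaves out.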

Consistent Brand Voice Across Languages

Maintaining the same voice identity across regions strengthens brand recognition and trust. AI voice cloning ensures uniform tone, pacing, and personality.

Scalability and Flexibility

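Once deployed, AI voice systems scale with far less effort than studio recording. Whether you generate 10 minutes or 10,000 hours of audio, the workflow stays the same, and larger jobs can typically be run in parallel rather than scheduled around actor and studio availability.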

Challenges and Limitations to Consider

Accent Authenticity

While AI voices have improved significantly, subtle accent inaccuracies can still occur, especially in less-resourced languages. Careful testing is essential for sensitive use cases.

Ethical and Legal Considerations

Voice cloning raises ethical concerns around consent and misuse. Reputable platforms enforce strict policies, watermarking, and user verification.

Cultural Context and Emotional Nuance

Language is deeply tied to culture. AI voices may require human review to ensure tone and emotional intent align with local expectations.

Best Multilingual AI Voice Tools Compared

| Platform | Languages | Voice Cloning | API Access | Pricing Transparency |
|---|---|---|---|---|
| Tool A | 100+ | Yes | Yes | High |
| Tool B | 60+ | Limited | Yes | Medium |
| Tool C | 40+ | No | No | Low |

Choosing the right tool depends on language coverage, voice quality, compliance requirements, and integration needs.
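A comparison like this can be turned into a simple shortlist filter. The sketch below encodes the three placeholder tools from the table; the `Tool` class, the `shortlist` function, and the choice to treat "Limited" cloning as not meeting a full-cloning requirement are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    min_languages: int         # "100+" in the table is stored as 100
    voice_cloning: str         # "Yes", "Limited", or "No"
    api_access: bool
    pricing_transparency: str  # "High", "Medium", or "Low"


TOOLS = [
    Tool("Tool A", 100, "Yes", True, "High"),
    Tool("Tool B", 60, "Limited", True, "Medium"),
    Tool("Tool C", 40, "No", False, "Low"),
]


def shortlist(tools: list[Tool], needed_languages: int,
              need_full_cloning: bool = False, need_api: bool = False) -> list[str]:
    """Return the names of tools that satisfy every stated requirement."""
    return [
        t.name
        for t in tools
        if t.min_languages >= needed_languages
        and (not need_full_cloning or t.voice_cloning == "Yes")
        and (not need_api or t.api_access)
    ]


if __name__ == "__main__":
    print(shortlist(TOOLS, needed_languages=50, need_api=True))
```

Hard requirements such as API access work well as filters, while softer factors like voice quality usually still need listening tests.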

How to Choose the Right Multilingual AI Voice Solution

For Businesses and Enterprises

Enterprises should prioritize scalability, security, and compliance. Look for platforms offering enterprise-grade APIs, data protection, and clear licensing terms.

For Creators and Educators

Ease of use, voice naturalness, and flexible pricing matter most. Subscription-based models with generous usage limits are often ideal.

For Developers

Developers benefit from robust documentation, SDKs, and low-latency APIs that integrate smoothly into existing applications.
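When evaluating latency, it can help to wrap whatever SDK call a platform provides in a small timing helper. The sketch below is vendor-neutral: `measure_latency` accepts any callable that takes text and a language code, and `fake_synthesize` is a hypothetical stand-in rather than a real provider API.

```python
import time
from typing import Callable


def measure_latency(synthesize: Callable[[str, str], bytes],
                    text: str, lang: str) -> tuple[bytes, float]:
    """Call `synthesize` once and return (audio_bytes, elapsed_seconds)."""
    start = time.perf_counter()
    audio = synthesize(text, lang)
    elapsed = time.perf_counter() - start
    return audio, elapsed


def fake_synthesize(text: str, lang: str) -> bytes:
    """Hypothetical stand-in for a real SDK call; returns placeholder bytes."""
    return f"{lang}:{text}".encode("utf-8")


if __name__ == "__main__":
    audio, seconds = measure_latency(fake_synthesize, "Hello", "en")
    print(len(audio), f"{seconds:.6f}s")
```

Passing the synthesis function in as a parameter keeps the timing code independent of any one vendor, so the same helper can compare several platforms side by side.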

Why Use ai.duythin.digital to Choose AI Voice Solutions

At ai.duythin.digital, we help businesses and individuals make informed AI decisions without wasting time or budget.

- Independent, transparent AI reviews

- Side-by-side feature and pricing comparisons

- Insights from Vietnam’s leading AI community

Our mission is to simplify AI adoption by cutting through hype and focusing on real-world value.

Future Trends in Multilingual AI Voice

Real-Time Multilingual Conversations

Next-generation systems will enable live, two-way conversations with real-time language translation and voice synthesis.

Hyper-Realistic Voice Avatars

AI voices will increasingly pair with digital avatars, transforming customer service, education, and virtual events.

Stronger Regulation and Ethical Standards

Governments and platforms are moving toward clearer regulations to protect voice identity and prevent misuse.

Frequently Asked Questions

Can AI voices truly sound native?

In many major languages, yes. Advanced neural models can produce speech that listeners perceive as native-level in tone and pronunciation.

Is multilingual AI voice legal for commercial use?

Generally yes, provided you comply with platform licensing terms, obtain consent for voice cloning, and follow the regulations that apply in your target markets.

Can one voice speak multiple languages?

Yes. Modern multilingual AI voice systems are designed to preserve a single voice identity across languages, so one cloned or stock voice can narrate the same script in each target language.

Final Thoughts: The Value of Multilingual AI Voice

Multilingual AI voice is no longer experimental technology. It is a practical, scalable solution for global communication. By reducing costs, accelerating localization, and improving user experience, it empowers businesses and creators to speak to the world with clarity and confidence.

If you are exploring AI voice solutions and want unbiased insights, comparisons, and clear pricing guidance, visit ai.duythin.digital and make smarter AI decisions today.